Why Perplexity Is Stepping Back from the Model Context Protocol (MCP) Internally

In March 2026, during Perplexity's Ask 2026 developer conference, CTO Denis Yarats announced that the company is moving away from Anthropic's Model Context Protocol (MCP) for its internal systems and enterprise-facing operations. Instead, Perplexity is prioritizing traditional REST APIs and command-line interfaces (CLIs), while also rolling out new tools such as its multi-model Agent API.

This shift doesn't mean abandoning MCP entirely. Perplexity continues to support it for certain use cases, such as enabling local desktop tools like Claude to access Perplexity's real-time search. However, for production-scale, mission-critical, and customer-facing workloads, the protocol no longer meets the company's reliability, efficiency, and security requirements.

What Is MCP and Why Was It Promising?

Introduced by Anthropic in late 2024, the Model Context Protocol is an open standard designed to standardize how AI agents and large language models interact with external tools, data sources, and services. It acts as a universal interface, often described as "USB-C for AI," that lets models discover, describe, and call tools without a custom integration for each one. MCP servers can run locally or remotely, communicating via transports like stdio or HTTP, and have powered integrations in tools such as Claude Desktop, Cursor, and VS Code.
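To make the discover-and-call flow concrete, here is a minimal sketch of the two JSON-RPC 2.0 messages a client sends to list a server's tools and then invoke one. The `tools/list` and `tools/call` method names come from the MCP specification; the `web_search` tool, its `query` argument, and the `jsonrpc_request` helper are hypothetical and used only for illustration. A real session would also begin with an initialization handshake, which is omitted here.

```python
import json


def jsonrpc_request(request_id, method, params=None):
    """Build a JSON-RPC 2.0 request, the message envelope MCP uses."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


# Step 1: the client asks the server which tools it offers.
list_tools = jsonrpc_request(1, "tools/list")

# Step 2: the client invokes one tool by name with structured arguments.
# "web_search" and its "query" argument are hypothetical examples.
call_tool = jsonrpc_request(
    2,
    "tools/call",
    {"name": "web_search", "arguments": {"query": "MCP roadmap"}},
)

# Over the stdio transport, each message travels as one line of JSON.
wire = "\n".join(json.dumps(m) for m in (list_tools, call_tool))
print(wire)
```

The server's reply to `tools/list` carries each tool's name, description, and input schema, and that is the material the client then has to place in the model's context.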

Perplexity previously maintained an official MCP server to let users plug its search capabilities directly into compatible AI clients, and that support remains active.

The Key Reasons for the Internal Shift

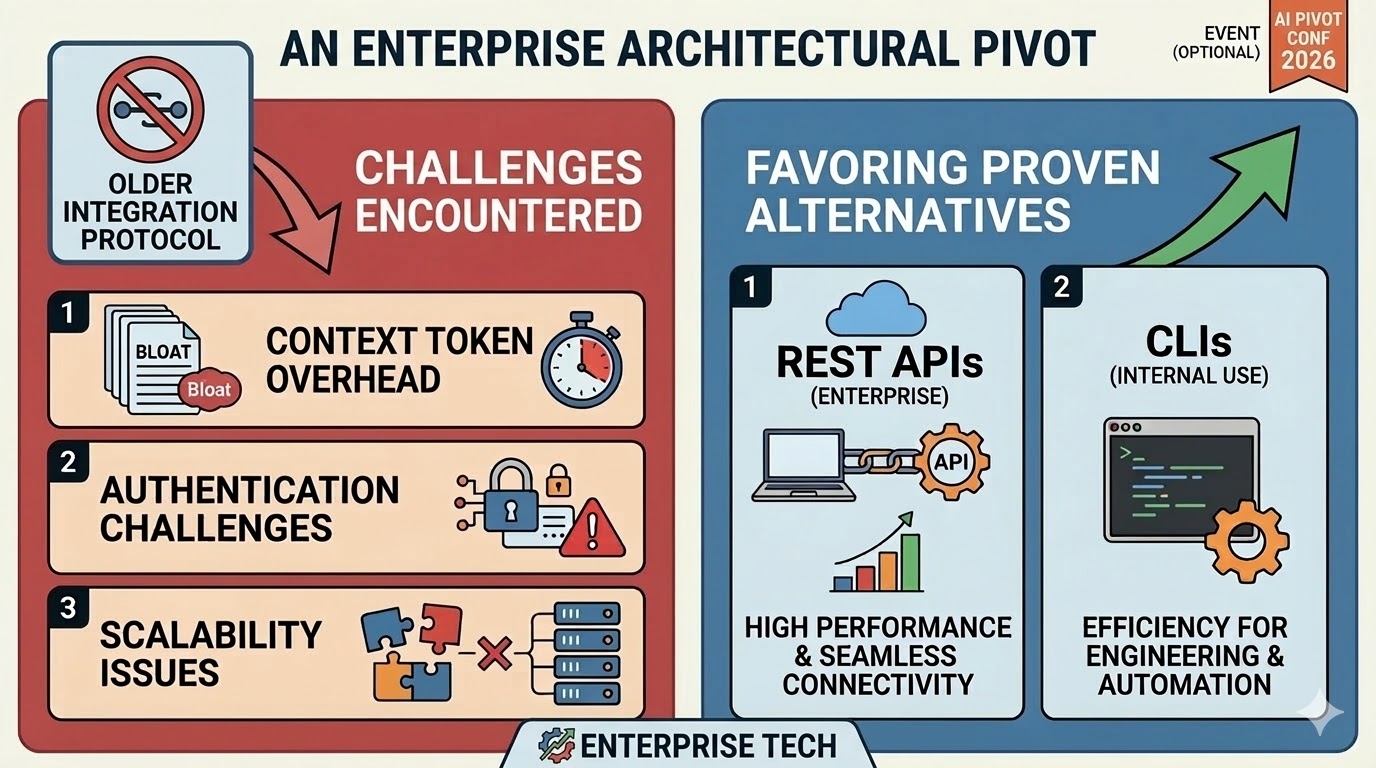

Perplexity's decision stems from practical challenges encountered when scaling MCP beyond lightweight or local development environments. The main issues include:

Context Window Overhead and Token Inefficiency

MCP interactions often require loading extensive tool schemas, descriptions, traces, and metadata into the model's context for every call. This consumes a significant number of tokens, sometimes adding tens of thousands per interaction, which drives up both cost and latency. In high-volume or complex agentic workflows, this "token bloat" becomes a major bottleneck.
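A rough back-of-the-envelope sketch shows how schema overhead grows with the number of exposed tools. Everything here is an assumption made for illustration: the four-characters-per-token heuristic stands in for a real tokenizer, and `make_tool` generates hypothetical tool definitions of modest size. Actual figures depend on the model's tokenizer and on how verbose the real schemas are.

```python
import json

# Rough heuristic, not a real tokenizer: about 4 characters per token.
CHARS_PER_TOKEN = 4


def estimate_tokens(text):
    """Approximate the token count of a string using the heuristic above."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def make_tool(i):
    """Return a hypothetical tool definition: name, description, input schema."""
    return {
        "name": f"tool_{i}",
        "description": "Placeholder description of what this tool does. " * 5,
        "inputSchema": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "Search text"}
            },
            "required": ["query"],
        },
    }


def schema_overhead(num_tools):
    """Estimate the tokens spent just describing num_tools tools to the model."""
    tools = [make_tool(i) for i in range(num_tools)]
    return estimate_tokens(json.dumps(tools))


for n in (5, 50, 200):
    print(n, "tools ->", schema_overhead(n), "estimated tokens per request")
```

Because the definitions are resent with each request, the overhead scales with both the number of tools and the number of calls, which is why it matters most in high-volume workflows.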

Authentication and Security Friction

Enterprise clients demand robust features like OAuth flows, granular permissions, rate limiting, audit logs, and secure credential management. MCP lacks strong built-in support for these, making it difficult to enforce enterprise-grade security across multiple tool servers. Traditional REST APIs, with decades of mature tooling for authentication and observability, handle these requirements far more reliably.
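As an illustration of why REST fits existing security tooling, the sketch below builds (but does not send) an authenticated request using only the Python standard library. The base URL, the `/search` path, the `X-Request-Id` header, and the `build_search_request` helper are all hypothetical; this is not Perplexity's actual API. The point is only that the credential and a traceable request ID travel in ordinary HTTP headers that gateways, rate limiters, and audit logs already understand.

```python
import json
import urllib.request
import uuid


def build_search_request(base_url, api_key, query):
    """Build a POST request with a bearer token and a per-request ID."""
    body = json.dumps({"query": query}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/search",  # hypothetical endpoint
        data=body,
        method="POST",
        headers={
            # Standard bearer-token authentication header.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            # Hypothetical header for correlating audit log entries.
            "X-Request-Id": str(uuid.uuid4()),
        },
    )


# Placeholder values; nothing is sent over the network here.
req = build_search_request("https://api.example.com/v1", "sk-demo", "MCP roadmap")
print(req.get_method(), req.full_url)
```

Passing the resulting object to `urllib.request.urlopen` would send it; in practice the key would come from a secrets manager rather than a literal string.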

Reliability and Performance at Scale

The protocol's default transports (especially stdio) work well for single-machine, local setups but introduce unpredictability, higher latency, and monitoring difficulties in distributed or production environments. Agent behavior can become non-deterministic when models decide tool usage, leading to inconsistent outcomes compared to the deterministic nature of direct API or CLI calls.

These limitations leave MCP well suited to rapid prototyping, local dev tooling, and niche integrations, but less suitable for anything requiring production stability, cost efficiency, or strict compliance.

What Perplexity Is Using Instead

For internal operations and larger clients, the company has returned to battle-tested REST APIs, which excel in security, scalability, and predictability. They've complemented this with CLIs for streamlined execution in certain workflows.

At the same conference, Perplexity launched an expanded API platform, including:

Agent API: A managed runtime for agentic workflows with built-in orchestration, retrieval, tool execution, and multi-model routing (supporting models from OpenAI, Anthropic, Google, xAI, NVIDIA, and more).

Supporting APIs for embeddings, sandboxed code execution, and search.

These tools provide the flexibility of agent-like behavior (multi-step reasoning, tool use) without the protocol overhead, positioning APIs as the foundational layer rather than something wrapped by MCP.

The Broader Implications

Perplexity's move highlights a maturing phase in agentic AI: early hype around universal protocols like MCP is giving way to real-world engineering trade-offs. While MCP remains valuable for lightweight, local, or open-ecosystem scenarios, production demands, especially in enterprise settings, favor simpler, more controllable primitives like APIs and CLIs.

The protocol isn't "dead"; its maintainers continue evolving it (with roadmaps addressing production concerns), and it retains utility in specific niches. But Perplexity's high-profile shift sends a clear signal: where scale, security, cost, and reliability matter, the established stack still wins.

In short, this isn't a rejection of innovation. It's a prioritization of what works at Perplexity's level of ambition and responsibility to enterprise users.
