Harness Engineering: The Discipline That Makes AI Agents Production-Ready

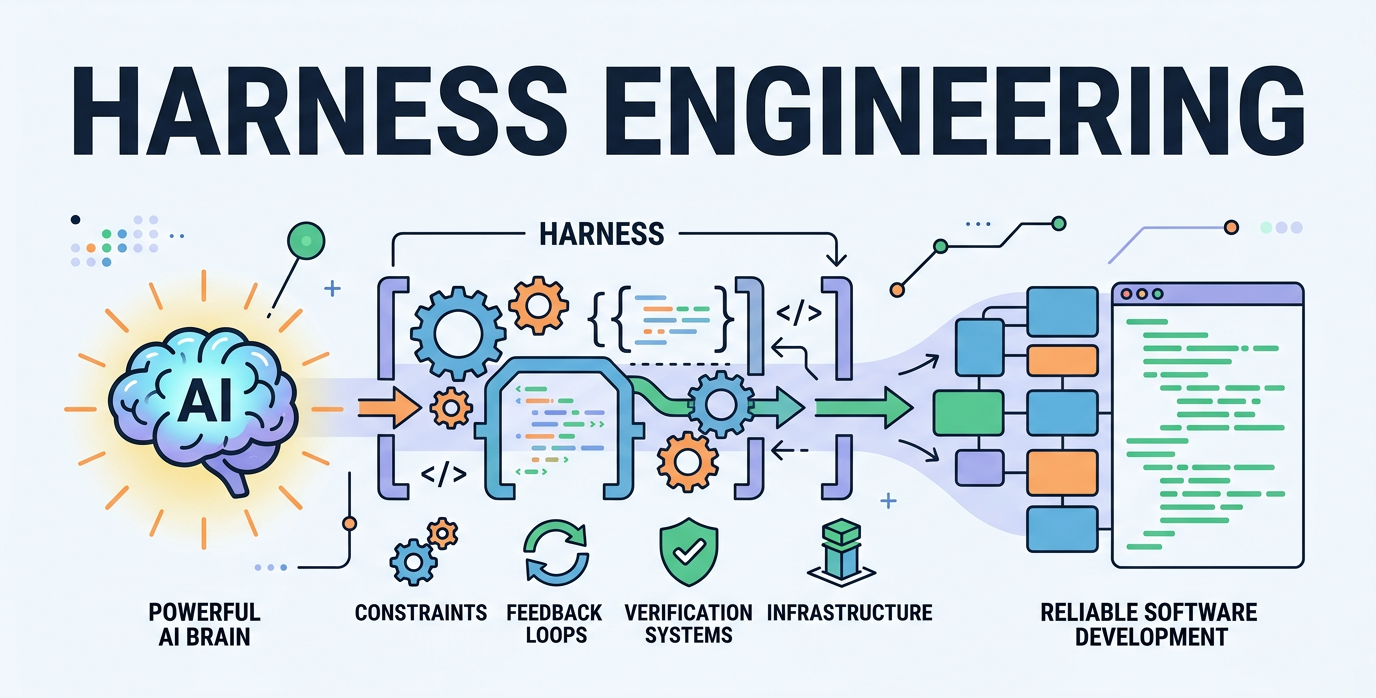

As AI agents like OpenAI's Codex and Anthropic's Claude write millions of lines of code autonomously, traditional software engineering is evolving. The critical skill isn't prompting models better. It's engineering the "harness" around them.

What Is Harness Engineering?

Harness Engineering is the emerging discipline of designing constraints, tools, feedback loops, documentation, and verification systems that guide powerful but unpredictable AI agents to produce reliable, maintainable, and scalable software outputs. Humans specify intent and boundaries; agents execute within them. This shifts engineering from writing code to architecting environments where AI can operate safely at scale.

Coined prominently in late 2025 discussions (e.g., Mitchell Hashimoto's writings) and formalized in 2026 by OpenAI's internal experiments, Harness Engineering addresses the core limitation of pure context engineering: agents still drift, accumulate "entropy" (cruft), or fail in long-running tasks without structured guardrails.

Why Harness Engineering Matters More Than Model Size in 2026

In early 2026, OpenAI built and shipped an internal product beta with over 1 million lines of code, none of them written manually by humans, using Codex agents under a strict "no manual code" constraint. That constraint forced the team to build a robust harness, which in turn increased engineering velocity by orders of magnitude.

Similar patterns appear at Anthropic, Stripe, and emerging agent companies: when agents make mistakes, engineers don't just fix the output. They engineer the system so the mistake never recurs. As Martin Fowler noted in February 2026, this mixes deterministic rules (linting, module boundaries) with LLM-based checks to keep agents aligned.

Without a harness, agents excel in demos but collapse in production. With one, they handle complex, long-chain workflows reliably.

Key Components of a Modern Harness (2026 Best Practices)

Architectural Constraints and Boundaries

Enforce module boundaries, stable data structures, and domain-specific rules via machine-readable artifacts (e.g., architecture decision records, schema validators). OpenAI emphasizes central enforcement of boundaries while allowing local autonomy—mirroring large platform teams.
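
As a rough illustration of a machine-readable boundary rule, the sketch below checks Python imports against a layering map. The module names (`ui`, `service`, `domain`) and the `ALLOWED_IMPORTS` table are hypothetical, and this is not OpenAI's actual tooling; it only shows how such a constraint might be enforced deterministically.

```python
import ast

# Hypothetical layering rules: each governed module may import only the listed ones.
ALLOWED_IMPORTS = {
    "ui": {"service"},
    "service": {"domain"},
    "domain": set(),
}


def imported_top_level_modules(source: str) -> set[str]:
    """Return the top-level module names imported by a piece of Python source."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from x.y import z" statements; relative imports are skipped.
            names.add(node.module.split(".")[0])
    return names


def boundary_violations(module: str, source: str) -> list[str]:
    """List governed modules that `module` imports without permission."""
    governed = set(ALLOWED_IMPORTS)
    allowed = ALLOWED_IMPORTS[module]
    imported = imported_top_level_modules(source)
    return sorted(
        name
        for name in imported
        if name in governed and name != module and name not in allowed
    )


if __name__ == "__main__":
    agent_written = "import json\nimport ui\nfrom service.api import handler\n"
    print(boundary_violations("domain", agent_written))
```

A check like this can run in CI on every agent-produced change, so a boundary violation fails fast instead of relying on the agent to remember the rule.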

Feedback Loops and Observability

Build closed-loop systems: trace failures, cluster error patterns, and feed corrections back into the harness. LangChain's Deep Agents team held the model constant (GPT-5.2-Codex) on Terminal Bench 2.0 and raised its score from 52.8% to 66.5% (from the Top 30 to the Top 5) by tracing failures and improving the harness, with no model changes.
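
One simple way to cluster error patterns is to normalize each failure message into a signature and count the signatures. The sketch below assumes failures arrive as plain strings; the normalization rules and sample messages are illustrative, not taken from any team's actual pipeline.

```python
import re
from collections import Counter


def failure_signature(message: str) -> str:
    """Collapse volatile details so similar failures share one signature."""
    sig = re.sub(r"'[^']*'|\"[^\"]*\"", "<str>", message)  # quoted values
    sig = re.sub(r"\d+", "<n>", sig)  # numbers such as durations or line numbers
    return sig.strip().lower()


def cluster_failures(messages: list[str]) -> list[tuple[str, int]]:
    """Group failures by signature, most frequent first."""
    return Counter(failure_signature(m) for m in messages).most_common()


if __name__ == "__main__":
    observed = [
        "Timeout after 30s running 'pytest'",
        "Timeout after 45s running 'make test'",
        "ModuleNotFoundError: No module named 'foo'",
    ]
    for signature, count in cluster_failures(observed):
        print(count, signature)
```

The largest clusters are the natural candidates for a permanent harness fix, such as a new tool, a longer timeout, or a pre-flight dependency check.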

Verification and Guardrails

Combine deterministic tools (linters, type checkers, unit test generators) with AI-driven validation (e.g., agents reviewing other agents' code). Include "garbage collection" for entropy—periodic refactoring agents that clean cruft.
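
The combination of deterministic and AI-driven checks might be wired together as below. This is a minimal sketch: the two rule-based checks use Python's standard `ast` module, while `reviewer` is a hypothetical stand-in for a call to a reviewing agent, passed in as a plain function so the pipeline stays testable without a model.

```python
import ast
from typing import Callable, Optional

# A check returns an error message, or None when the source passes.
Check = Callable[[str], Optional[str]]


def syntax_check(source: str) -> Optional[str]:
    """Deterministic check: the code must parse."""
    try:
        ast.parse(source)
    except SyntaxError as exc:
        return f"syntax error: {exc.msg}"
    return None


def no_bare_except(source: str) -> Optional[str]:
    """Deterministic lint rule; assumes syntax_check has already passed."""
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            return f"bare except at line {node.lineno}"
    return None


def verify(source: str, deterministic: list[Check], reviewer: Check) -> list[str]:
    """Run cheap rule-based checks first; consult the reviewer only if they pass."""
    for check in deterministic:
        problem = check(source)
        if problem:
            return [problem]  # stop early and skip the slower reviewer
    verdict = reviewer(source)
    return [verdict] if verdict else []


if __name__ == "__main__":
    def stub_reviewer(source: str) -> Optional[str]:
        # Placeholder for an LLM-based review; always approves here.
        return None

    candidate = "try:\n    pass\nexcept:\n    pass\n"
    print(verify(candidate, [syntax_check, no_bare_except], stub_reviewer))
```

Ordering the checks this way keeps the fast, deterministic rules as the first gate and reserves the model-based review for code that already passes them.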

Lifecycle Management and Scaffolding

Standardize repository setup, CI/CD, documentation, and observability generation. OpenAI started with Codex CLI scaffolds and evolved to full declarative prompts for workflows.
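
A standardized repository setup can itself be verified mechanically. The sketch below assumes a hypothetical list of required scaffold files; the file names are placeholders, not a convention stated by OpenAI.

```python
from pathlib import Path

# Hypothetical scaffold every agent-managed repository is expected to contain.
REQUIRED_FILES = [
    "README.md",
    "docs/architecture.md",
    "ci/pipeline.yml",
]


def missing_scaffold_files(root: Path, required: list[str] = REQUIRED_FILES) -> list[str]:
    """Return the required files that do not exist under `root`."""
    return [rel for rel in required if not (root / rel).is_file()]


if __name__ == "__main__":
    print(missing_scaffold_files(Path(".")))
```

Running such a check when a repository is created, and again in CI, keeps every project starting from the same baseline.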

How to Build Your First Harness: Step-by-Step (Practical 2026 Guide)

Start with a Forcing Function: Adopt "no manual code" for a greenfield module or refactor to force harness investment.

Define Intent Declaratively: Use high-level specs, ADRs, and schemas instead of low-level instructions.

Instrument for Traceability: Log every agent step; analyze clusters of failures.

Engineer Corrections Permanently: Turn one-off fixes into reusable constraints (e.g., new lint rules or sub-agents).

Iterate with Hybrid Checks: Mix rule-based and LLM verifiers for speed and accuracy.

Monitor Entropy: Schedule "refactor agents" to prevent drift in long-running projects.
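
The traceability step above can be sketched as a small step logger. The `StepRecord` fields and the `Trace` class are assumptions made for illustration; a real harness would likely send these records to its existing logging or tracing backend.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class StepRecord:
    """One logged agent step (field names are illustrative)."""
    run_id: str
    step: int
    action: str
    ok: bool
    detail: str = ""
    timestamp: float = field(default_factory=time.time)


class Trace:
    """Collects every step of one agent run so failures can be analyzed later."""

    def __init__(self, run_id: str) -> None:
        self.run_id = run_id
        self.records: list[StepRecord] = []

    def log(self, action: str, ok: bool, detail: str = "") -> StepRecord:
        record = StepRecord(self.run_id, len(self.records) + 1, action, ok, detail)
        self.records.append(record)
        return record

    def failures(self) -> list[StepRecord]:
        return [r for r in self.records if not r.ok]

    def to_jsonl(self) -> str:
        """Serialize the run as JSON Lines, one step per line."""
        return "\n".join(json.dumps(asdict(r)) for r in self.records)


if __name__ == "__main__":
    trace = Trace("run-1")
    trace.log("edit file", ok=True)
    trace.log("run tests", ok=False, detail="2 tests failed")
    print(trace.to_jsonl())
```

The `detail` strings of failed steps are the raw material for failure clustering, and each recurring cluster is a candidate for a permanent constraint.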

Teams following this see 2–5× reliability gains in agentic workflows, per 2026 case studies from OpenAI and independent benchmarks.

Comparison: Harness Engineering vs. Earlier Paradigms

| Paradigm | Focus | Strengths | Limitations in 2026 Production | When It Breaks |
|---|---|---|---|---|
| Prompt Engineering | Single-turn instructions | Quick for demos | No state, no tools, high hallucination | Multi-step tasks |
| Context Engineering | What the agent "sees" (RAG, memory) | Reduces hallucinations short-term | Token limits, attention decay, business gaps | Long chains, complex domains |
| Harness Engineering | How the agent "runs" (environment + loops) | Systemic reliability, no model changes needed | Requires upfront investment | N/A (designed for production drift) |

Harness Engineering builds on the prior two but shifts to execution optimization, making it the dominant paradigm for agentic software in 2026.
