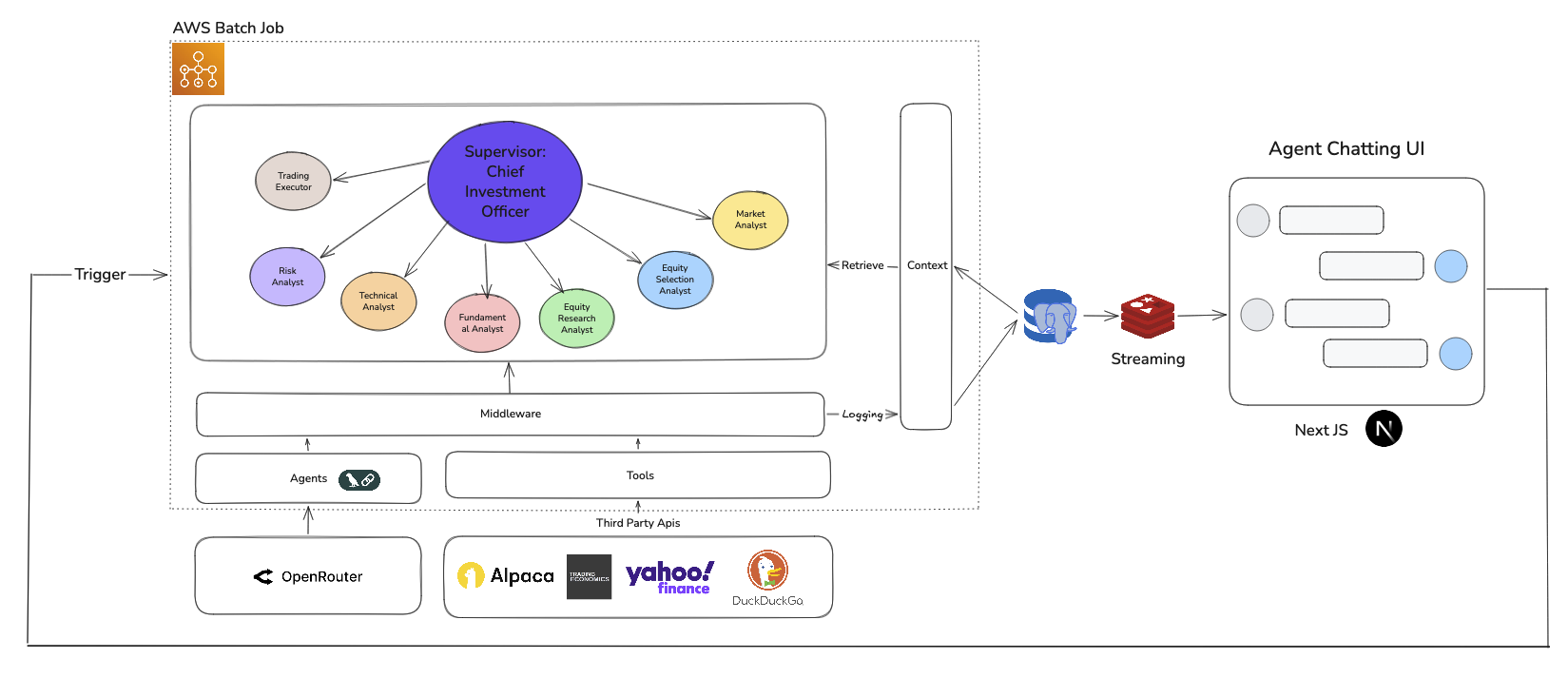

A Multi-Agent Architecture for Autonomous AI Trading

Discover the scalable, observable architecture powering sandx.ai – autonomous AI agents collaborating like a hedge fund. Explore 6 foundational decisions for AI trading success: hierarchical agents, LangChain middleware, Prisma context, Alpaca data, Redis streaming, and AWS Batch scaling.

1. Supervisor-Subagent Collaboration: Hierarchical Orchestration for Robust Trading Decisions

Why Hierarchical Agents Beat Single-Agent Systems

sandx.ai deploys a supervisor-subagent topology that mimics an investment committee: one Chief Investment Officer (CIO) agent orchestrates seven specialist analysts (Technical, Fundamental, Sentiment, Risk, and others).

The CIO decomposes tasks, routes to experts, and synthesizes outputs via multi-agent voting – resolving conflicts for superior signal quality over monolithic agents.
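As a rough illustration of the synthesis step, the sketch below tallies subagent opinions with a confidence-weighted vote. The `Opinion` type, the `synthesize` function, the weighting scheme, and the fall-back to "hold" are all assumptions made for this example; the article does not specify how sandx.ai's voting actually works.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Opinion:
    """One specialist's recommendation (hypothetical shape)."""
    analyst: str       # e.g. "technical", "risk"
    action: str        # "buy", "sell", or "hold"
    confidence: float  # 0.0 to 1.0, self-reported by the subagent


def synthesize(opinions: list[Opinion], min_share: float = 0.5) -> str:
    """Confidence-weighted vote; returns "hold" unless one action
    holds strictly more than `min_share` of the total weight."""
    weights: Counter = Counter()
    for op in opinions:
        weights[op.action] += op.confidence
    total = sum(weights.values())
    if total == 0:
        return "hold"  # no opinions, or nobody is confident
    action, weight = weights.most_common(1)[0]
    return action if weight / total > min_share else "hold"
```

With this rule, two moderately confident "buy" opinions can outvote one confident "sell", while an even split resolves to "hold" rather than to an arbitrary winner.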

"Single agents hallucinate. Teams deliberate." – sandx.ai architecture principle

2. LangChain v1 Middleware: Session Restore & Live Streaming Logging

Custom Middleware for Production Observability

LangChain v1.0 middleware intercepts every agent step and logs it to the database automatically, enabling live chat streaming and session restore.

The same hook tracks agent behavior for logging, analytics, and debugging.

Key benefits: debugging at scale, user session continuity, and real-time monitoring without noticeable performance overhead.
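The idea of logging every step and replaying the log to restore a session can be sketched without any framework. The code below is plain Python, not LangChain's middleware API: `LoggingMiddleware`, `StepLog`, and the in-memory `store` (standing in for the database) are hypothetical names chosen for this example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StepLog:
    session_id: str
    seq: int       # position of the step within its session
    step: str      # which agent or tool produced the output
    output: str
    ts: float      # wall-clock time of the step


@dataclass
class LoggingMiddleware:
    """Wraps agent steps and records each result (in memory here)."""
    store: list[StepLog] = field(default_factory=list)

    def wrap(self, session_id: str, step: str,
             fn: Callable[[str], str]) -> Callable[[str], str]:
        def logged(inp: str) -> str:
            out = fn(inp)
            seq = sum(1 for r in self.store if r.session_id == session_id)
            self.store.append(StepLog(session_id, seq, step, out, time.time()))
            return out
        return logged

    def restore(self, session_id: str) -> list[str]:
        """Rebuild a session's outputs in the order they were produced."""
        rows = [r for r in self.store if r.session_id == session_id]
        return [r.output for r in sorted(rows, key=lambda r: r.seq)]
```

Because every step passes through `wrap`, the same records can serve a live stream (read the newest rows) and a restore (read all rows for a session).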

LangGraph Logging Middleware for Session Restore and Streaming

3. Prisma ORM Context Management: Type-Safe AI Memory

Why Prisma Powers Reliable Multi-Agent Context

Trading agents need consistent context: portfolios, strategies, histories. Prisma delivers:

Prisma ORM makes our context type-safe, productive to work with, and easy to query.

Result: Zero-downtime schema evolution + query power without SQL complexity.

Compile-time validation: Prisma's generated types catch invalid field access, missing relations, or schema mismatches during development—not in production trading.

Safe refactoring: Errors are caught early, before deploying to production.

Schema as single source of truth: Context model changes automatically propagate type updates, preventing regressions when evolving agent logic.

Type Hints: Accelerate Development

IDE autocomplete for context fields and relations reduces lookup time and speeds iteration.

Avoid writing complex SQL: Prisma's query layer lets us write expressive queries without digging into complex SQL.

Simple CRUD: Efficient Context Lifecycle

Atomic updates: Trading requires atomic updates when buying or selling stocks, so that a trade is never partially recorded.

Nested operations: Prisma handles relational writes seamlessly—persisting complex agent workflows without boilerplate.
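To make the atomicity requirement concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in for Prisma and PostgreSQL. The `account` and `position` tables and the `buy` function are invented for this example and are not sandx.ai's schema; the point is only that the position write and the cash debit either both commit or both roll back.

```python
import sqlite3


def open_db() -> sqlite3.Connection:
    """Create an in-memory database with one funded account."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE account ("
        "id INTEGER PRIMARY KEY, cash REAL NOT NULL CHECK (cash >= 0))"
    )
    conn.execute(
        "CREATE TABLE position ("
        "account_id INTEGER, ticker TEXT, qty INTEGER NOT NULL, "
        "PRIMARY KEY (account_id, ticker))"
    )
    conn.execute("INSERT INTO account (id, cash) VALUES (1, 1000.0)")
    conn.commit()
    return conn


def buy(conn: sqlite3.Connection, account_id: int, ticker: str,
        qty: int, price: float) -> bool:
    """Add shares and debit cash in one transaction."""
    try:
        with conn:  # commits on success, rolls back on any exception
            conn.execute(
                "INSERT OR IGNORE INTO position (account_id, ticker, qty) "
                "VALUES (?, ?, 0)", (account_id, ticker))
            conn.execute(
                "UPDATE position SET qty = qty + ? "
                "WHERE account_id = ? AND ticker = ?",
                (qty, account_id, ticker))
            # The CHECK constraint rejects a negative balance, which
            # raises IntegrityError and undoes the position update too.
            conn.execute(
                "UPDATE account SET cash = cash - ? WHERE id = ?",
                (qty * price, account_id))
        return True
    except sqlite3.IntegrityError:
        return False
```

In Prisma the equivalent grouping would be expressed through its transaction support; the sketch only shows the behavior the trading logic relies on.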

4. Alpaca Market Data: Institutional-Grade Real-Time Feeds

10K RPM Limits Enable Agent Parallelism

A single source of truth eliminates data silos: all agents reason from identical market reality.

With Alpaca integrated, sandx.ai agents operate on consistent, low-latency, institutional-grade data, turning market complexity into actionable intelligence.
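When several agents run in parallel, they all draw on the same requests-per-minute budget. One common way to share such a budget is a token bucket, sketched below. The `TokenBucket` class is hypothetical and is not part of Alpaca's SDK; the clock is passed in explicitly so the behavior is easy to follow and test.

```python
class TokenBucket:
    """Shared request budget that refills continuously over time."""

    def __init__(self, rate_per_min: int, now: float = 0.0) -> None:
        self.capacity = float(rate_per_min)       # maximum burst size
        self.tokens = self.capacity               # start with a full bucket
        self.refill_per_sec = rate_per_min / 60.0
        self.last = now                           # time of the last refill

    def try_acquire(self, now: float, n: int = 1) -> bool:
        """Take `n` tokens if available; `now` is a time in seconds."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= n:
            self.tokens -= n
            return True
        return False
```

In production code the caller would pass `time.monotonic()` as `now`; an agent that gets `False` back would wait briefly and retry rather than send the request.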

5. Redis Multi-Region: Sub-Second Global UI Updates

Simple Polling + Geo-Replication = Responsive UX

Agents → Redis → Frontend polling (1-2s intervals). Multi-region replication keeps each read from the nearest replica under 100 ms worldwide.

Multi-Region Replication for Global Low Latency

Regional Redis Replicas: Agent messages are stored in Redis instances replicated across multiple geographic regions. Users connect to their nearest replica, minimizing network round-trip time.

Automatic Failover: If a regional endpoint experiences issues, traffic routes to the next closest replica—ensuring consistent UI responsiveness worldwide.

Simple Polling, High Performance

No WebSocket complexity, just Redis performance.
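Polling stays cheap when each client remembers a cursor and asks only for messages newer than it. The sketch below uses an in-memory `MessageLog` as a stand-in for Redis; the class, its method names, and the integer cursor are assumptions for illustration, and the article does not say which Redis data structure sandx.ai uses.

```python
from dataclasses import dataclass, field


@dataclass
class MessageLog:
    """Append-only log with monotonically increasing message ids."""
    _items: list[tuple[int, str]] = field(default_factory=list)
    _next_id: int = 1

    def append(self, text: str) -> int:
        """Store a message (agent side) and return its id."""
        msg_id = self._next_id
        self._items.append((msg_id, text))
        self._next_id += 1
        return msg_id

    def read_after(self, cursor: int, limit: int = 100) -> tuple[list[str], int]:
        """Return messages with id > cursor, plus the cursor to send next."""
        new = [(i, t) for i, t in self._items if i > cursor][:limit]
        next_cursor = new[-1][0] if new else cursor
        return [t for _, t in new], next_cursor
```

A frontend would call `read_after` every 1-2 seconds with the cursor from its previous call, so an idle poll returns an empty list and leaves the cursor unchanged.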

Stream Buffering: Protecting the Database

Write-Ahead Buffer: Agents write messages to Redis first; a background worker asynchronously persists to Prisma/PostgreSQL. This decouples real-time delivery from durable storage.

Reduced DB Load: High-frequency agent thoughts, tool calls, and status updates hit Redis, not the primary database, preventing query contention during active trading sessions.
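The buffering pattern described above can be sketched as a small queue that a background worker drains in batches. `WriteBuffer` and its `persist` callback are hypothetical names; here the pending queue plays the role of Redis and `persist` plays the role of the database write.

```python
from collections import deque
from itertools import islice
from typing import Callable


class WriteBuffer:
    """Accepts writes immediately; persists them later in batches."""

    def __init__(self, persist: Callable[[list[str]], None],
                 batch_size: int = 50) -> None:
        self._pending: deque = deque()
        self._persist = persist
        self._batch_size = batch_size

    def write(self, msg: str) -> None:
        """Fast path used by agents: no database call happens here."""
        self._pending.append(msg)

    def flush(self) -> int:
        """Drain the buffer; returns the number of batches persisted."""
        batches = 0
        while self._pending:
            batch = list(islice(self._pending, self._batch_size))
            self._persist(batch)      # if this raises, the batch stays queued
            for _ in batch:
                self._pending.popleft()
            batches += 1
        return batches
```

Messages are removed from the queue only after `persist` returns, so a failed database write leaves them in place for the next flush.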

6. AWS Batch: Pay-Per-Job Agent Execution

Perfect for I/O-Bound AI Workloads

Agents spend about 90% of their time waiting on LLMs, APIs, and the database. AWS Batch fits that profile.

I/O-Bound vs. CPU-Intensive

Waiting, Not Computing: Agents spend most cycles waiting on LLM responses, third-party API calls (Alpaca, OpenRouter), and database queries—not performing heavy local computation.

Idle Resource Waste: Traditional always-on servers or oversized containers pay for CPU cycles that sit idle during these wait periods.

Operational Efficiency

Event-Driven Scaling: Jobs queue and execute only when triggered (market events, user requests), scaling to zero during idle periods.

Managed Infrastructure: No Kubernetes clusters or EC2 fleets to manage. AWS Batch handles provisioning, scheduling, and termination automatically.

Event Driven & Job Queuing

Automatic Queue Management: When triggered, jobs enter a managed queue. AWS Batch holds them there until the allocated compute resources become available.

On-Demand Invocation: The AWS Batch SDK allows the backend to submit agent jobs immediately with specific parameters (e.g., ticker=AAPL) in response to events such as cron schedules or user interactions.
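A submission like this might look as follows. The helper builds the keyword arguments for the Batch `SubmitJob` call, which boto3 exposes as `submit_job` with `jobName`, `jobQueue`, `jobDefinition`, and `parameters`. The function name, the queue name `agent-queue`, and the job definition name `agent-runner` are placeholders invented for this sketch, not sandx.ai's configuration.

```python
import re


def build_submit_job_request(agent: str, params: dict[str, str],
                             job_queue: str = "agent-queue",
                             job_definition: str = "agent-runner") -> dict:
    """Build kwargs for a Batch job submission (names are placeholders)."""
    # Job names allow only letters, digits, hyphens, and underscores,
    # so other characters (such as the dot in "BRK.B") become hyphens.
    raw_name = f"{agent}-{params.get('ticker', 'job')}"
    job_name = re.sub(r"[^A-Za-z0-9_-]", "-", raw_name)[:128]
    return {
        "jobName": job_name,
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        # Batch parameters are string-to-string; the job definition's
        # command can reference them as Ref::ticker and so on.
        "parameters": {k: str(v) for k, v in params.items()},
    }


# With boto3 installed and AWS credentials configured, the request
# could be sent like this (not executed in this sketch):
#   import boto3
#   boto3.client("batch").submit_job(**build_submit_job_request(
#       "cio", {"ticker": "AAPL"}))
```

Keeping the request construction separate from the network call makes the parameter mapping easy to unit test without touching AWS.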

Choosing an agent-friendly, event-driven runtime with job queuing allows sandx.ai to build a scalable, low-cost agent runtime for heavy multi-agent workflows.

Architecture = Alpha: Why sandx.ai Wins

Powerful models alone fail. sandx.ai succeeds through purpose-built infrastructure:

Intelligent orchestration that mirrors human expertise structures

Observable middleware that builds trust through transparency

Robust context management that ensures decision consistency

Real-time communication that delivers interactive user experiences

Reliable market data that grounds AI reasoning in reality

Cost-aware compute that scales with workload demands

By thoughtfully integrating these six pillars, we've built a platform where AI agents don't just trade, they reason, collaborate, and evolve. The result is a system that combines the speed of algorithms with the wisdom of structured decision-making.
